Demystifying the Black Box

By 2026, AI is everywhere, but most kids (and, let's be honest, most adults) treat it like a magical oracle. Code.org's AI for Oceans demystifies that black box by turning the abstract concept of 'training data' into a simple game of 'Fish or Not Fish?'

What makes this work isn't just the cute graphics; it's the 'aha!' moment when the student realizes the computer isn't actually smart: it only knows what it was shown. When they intentionally train the model with bad data (labeling a tire as a fish, for example) and then watch the computer confidently repeat that mistake later, the lesson sticks better than any lecture could.

Why it matters right now

We are raising the first generation to grow up alongside sophisticated large language models (LLMs) and other generative tools. If kids don't understand that these tools are built on human-curated data, they're prone to believing whatever the machine spits out. This tutorial introduces the concept of algorithmic bias without the intimidating academic jargon. Recognizing that a model is only as good as its training data is a foundational digital literacy skill.

How to use it

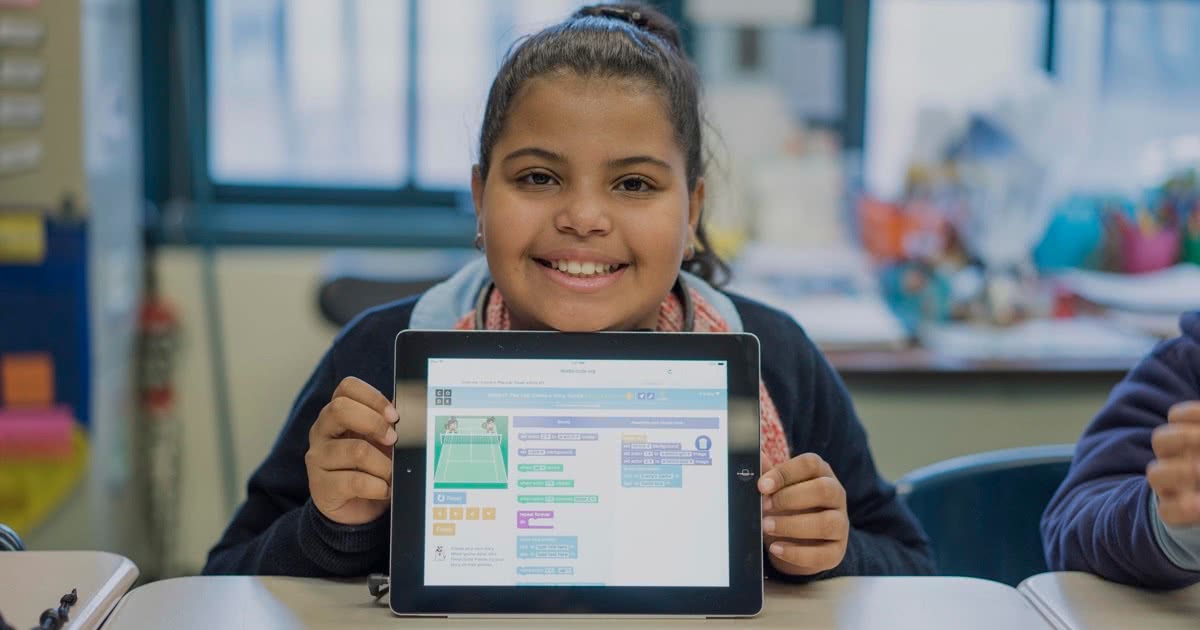

Don't just sit them in front of the laptop and walk away. This is best used as a 'co-pilot' activity: sit with them for the 45 to 60 minutes it takes to complete, and when the model fails, ask them why. Code.org designed this for the classroom, so it's structured to provoke questions. If your kid enjoys it, they're probably ready to move on to Scratch or more advanced Code.org modules.